AI: From Experimentation to Production

Dan Moskowitz Feb 10, 2026

A November 2025 McKinsey survey, which was conducted across thousands of participants in >100 countries across many industries and functions, showed that the percentage of respondents using AI in at least one business function has steadily grown year over year, from ~55% in 2023 to ~88% at the end of 2025. However, of the 88% who are using AI, only ~38% have either deployed to production or are in the process of doing so, while the remaining ~62% are still piloting or experimenting with small use cases. Among all survey respondents, the vast majority expect organization-wide AI adoption to increase over the next three years.1

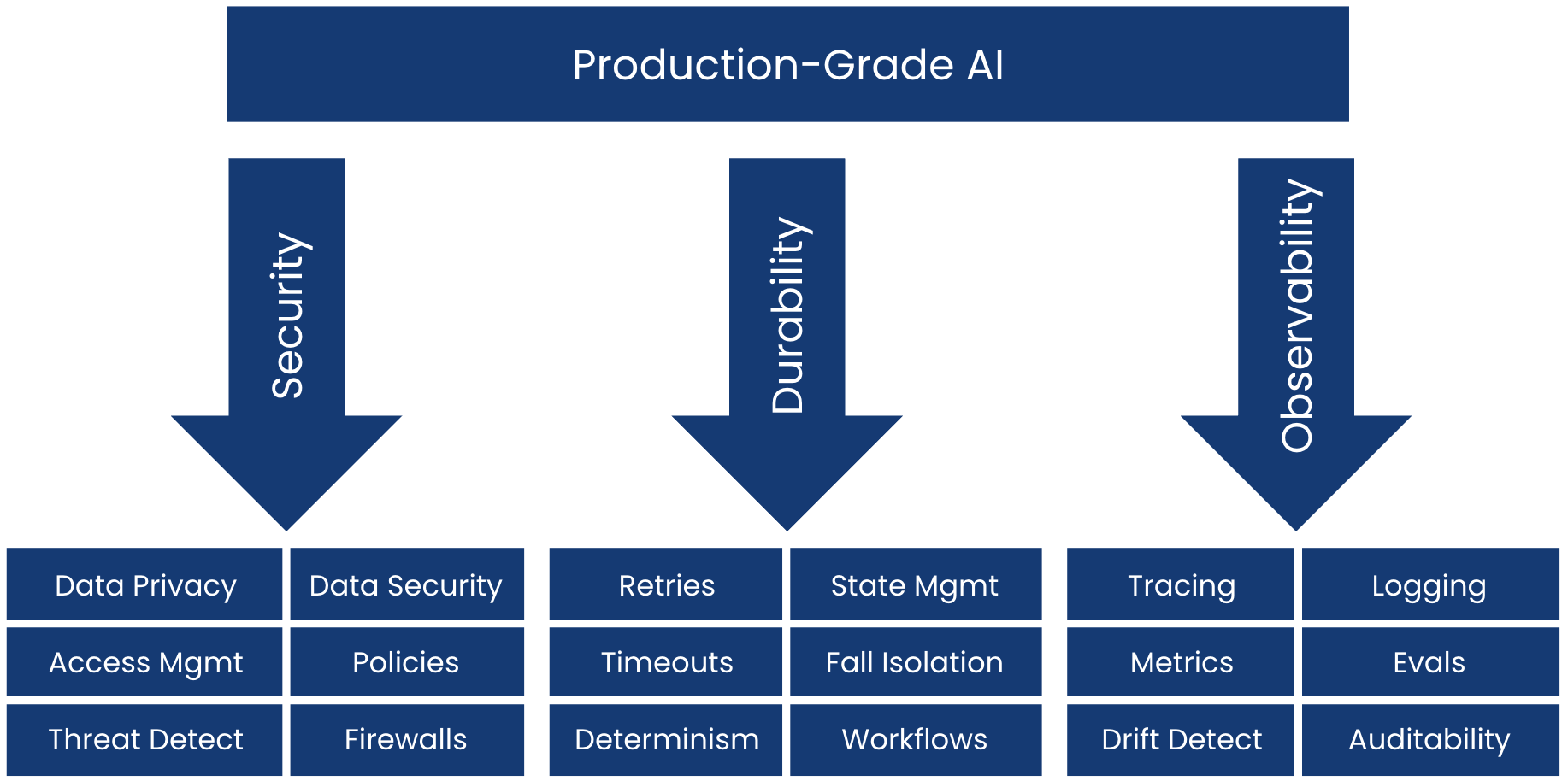

There exists a gap between practitioners’ expectations and hopes for AI and the current reality of the scope of their deployments which are mostly still in pre-production. Why does this gap exist? You will find multiple answers to that question, but mostly they fall into what we call the “three legs of the AI production stool:” Security, Durability, and Observability.

Source: Third Point Ventures analysis.

In our view, without solving each of the legs of the stool, it is impossible to confidently scale an AI application to production, especially for mature organizations with higher business risk. Can a $b+ run-rate enterprise afford to release an AI application to its own customers, powered by models that the organization doesn’t own, processing private customer data in ways that it can’t see? What about scaling an IT application supporting a global network of employees, which has model dependencies that may cause the entire IT network to crash – and with no way of seeing it coming?

Today, most business leaders are already convinced of the value that properly implemented AI automation can unlock in the organization. Security, durability, and observability represent the last mile to getting AI productive at scale.

Security

Big Picture:

Where is AI being used?

What data is being processed? Where? How?

Who can access what?

Can you create and enforce policies?

Can you defend against unique attacks against your AI systems (such as prompt injection)?

Can you audit everything?

These are the questions that security teams are grappling with in AI, even if the business units have already been experimenting with AI for some time. If you can’t answer these, then you can’t be sure that your usage of AI is compliant with the rest of your security policies, and you can’t be sure that you aren’t increasing your attack surface. You certainly can’t deploy your AI projects into production without strong procedures in place to address these questions. Forward-looking CISOs have been thinking about AI risks for some time now, but it was not a popular opinion reflected where budgets were being spent. That is rapidly changing. According to the World Economic Forum’s (“WEF”) 2026 annual survey, 64% of responding security professionals have already adopted processes to assess the security of AI tools prior to deployment – compared with only 37% this time last year.2

Consider the recent high-profile exploit found in Perplexity’s Comet AI browser. It was released to the public on July 9, 2025. Weeks later, security researchers from Brave discovered a vulnerability with potentially catastrophic implications for users: Comet did not differentiate between intentional user prompts and website content. Researchers noted that they could hide malicious prompts in web content to trick Comet into executing attacks against its users. For example, they created a Reddit post instructing Comet to retrieve a user’s one-time password from Gmail, effectively allowing them to hijack that account. It is phishing against an AI; you don’t need to trick the user into clicking a link. This is a new attack vector against a new production AI system. This vulnerability was reported to Perplexity on July 25. Brave noted that Perplexity was still unable to fix the vulnerability by August 20, despite several patches, highlighting how difficult it can be to fully safeguard these systems with novel attack paths.3

From the same 2026 WEF survey referenced earlier, the biggest AI security concern among professionals is sensitive data leakage. This is such an important concern to security practitioners that it ranks even higher than AI-powered offensive threats explicitly designed to attack organizations. The bedrock of solving AI data leakage is system visibility. If you don’t know where and how AI is being used, then you don’t know what to secure. This is a pre-production concern too, to the extent that sensitive data can also be fed to a pre-production AI system during training and experimentation. Think: old customer records used to fine-tune a model prior to release for live customers. What is fundamentally different about the risk in production is not the type of risk but the blast radius of a failure due to the larger scale of data that is potentially ingested in a live system interacting with new data 24/7.

We at Third Point Ventures have invested in multiple companies on the cutting edge of answering many of these questions:

Data discovery, processing, and privacy: DataGrail surfaces and resolves privacy and compliance risks (AI and non-AI) across your tech stack and third-party vendors. It flags AI subprocessor usage and high-risk AI usage patterns, scores those risks based on sensitive data categories and affected data subjects, and integrates them into a centralized risk register for prioritization and mitigation. The platform also orchestrates data subject access, opt-out, and deletion actions across internal and third-party systems, helping teams operationalize privacy rights across AI and non-AI processing. These capabilities are increasingly important as regulations like the GDPR, CPRA (and 20+ other state regulations), and emerging AI-specific rules expand expectations for visibility, accountability, and risk management in AI systems.

Policy creation and threat defense: Zenity is a security and governance platform for AI agents operating across cloud, SaaS, and custom platforms in production. As agents gain autonomy, access, and the ability to take action, security risk shifts from static configuration to runtime behavior. Zenity enables teams to define and enforce guardrails over agent configuration, permissions, and tool usage, while continuously discovering agents across the environment. Its AI Detection and Response capabilities monitor agent behavior in real time to identify risks such as prompt injection, data exfiltration, and misuse of tools, and trigger automated response actions. Zenity’s core focus is maintaining visibility and control over what agents do and what they access, which is foundational for operating agentic AI systems safely at scale.

Durability

Big Picture:

Will my AI workflows keep working in real-world conditions?

What happens when an API fails?

What happens when one agentic call in the chain times out?

What happens if a single hallucination propagates errors further in the chain?

What if a long-running workflow dies silently?

These are the questions that start to matter when an agentic workflow graduates from cool experiment to customer-facing. These are the same considerations given to non-AI workflows, but how you achieve durability in an agentic system is somewhat different.

Consider a high-stakes application such as in financial services. In January 2025, Barclays faced a three-day outage in the UK that resulted in ~56% of customer payments failing to transact. This effectively locked millions of Barclays customers out of access to their own money and left them unable to make payments. Unfortunately, this outage began on January 31, which is the deadline for UK tax payments, which had a particularly painful impact to a portion of those affected customers. This outage was expected to cost Barclays up to £7.5M in compensation – all for a partial outage over three days.4 According to research from Splunk and Oxford Economics, the total loss to financial services organizations due to downtime may be on the order of ~$152M.5

In non-AI systems, outages can broadly be attributed to “Things you can’t control” and “Things you can control.”

Things you can’t control: third-party dependency failures (cloud provider; hosted database; third-party APIs)

Things you can control: poor configurations; environment changes (“it worked on my machine but not on yours”); bugs that you introduce

What is so insidious about agentic workflows is that the list of “Things you can control” stays the same, but the “Things you can’t control” is much bigger because you are relying on model providers that you don’t own. In addition to the list of third party dependencies above, you must also consider the impact of: model API outages; hitting the rate limit for your model subscription; hallucinations; and generally, models going off-script in some wildly non-deterministic way that you can’t fully control.

In a multi-step workflow, a failure in any individual node in the chain can cause downstream problems if not total outage. In fact, a downstream hallucination may be more catastrophic than a total outage (if you are a bank, would you rather your payments app go dark for 24 hours, or send random payments to random customers for 24 hours?). It is for this reason that workflow durability and resiliency is so critical for AI applications running on live data, whereas it is less important during experimentation. This is where many regulated industry applications specifically may find it challenging to promote their applications to production before fully getting a handle on these issues.

Third Point Ventures portfolio company Diagrid focuses on making agentic workloads reliable in production. Its Catalyst platform handles the critical work of making AI agents survive failures and outages of any kind by persisting state, coordinating retries and recovering execution, so long running workflows do not lose data or context. Users can bring any agent framework and immediately get 99.99% uptime for all of their agents, including visibility into how workflows are actually executing, such as: what steps ran, where failures occurred and why; and how recovery played out. You can’t reliably operate workflows that you don’t understand. A useful side effect of this abstraction is that teams can change or swap underlying LLM providers without rewriting application logic to re-implement state management and failure handling, which is particularly valuable as models and providers continue to evolve quickly.

Observability

Big Picture:

Is anything silently changing under the hood (e.g. model changes, which I may not control, impacting performance)?

Is performance drifting over time?

Context rot – are my outcomes changing as I add or delete context?

Prompt rot – are my outcomes changing even though my prompts stay the same?

Solving the security and durability gaps might give an organization the confidence to deploy AI to production because it means the application is unlikely to cause catastrophic failure today. If you want to be sure that your models don’t break eventually, then you need ongoing observability and evaluation.

One of the biggest causes of model performance degradation is drift that occurs because real-world input data eventually looks different from the data populations that the models were trained on. Model drift might be obvious in some cases; in other cases, it might be small, insidious, and unable to be seen by the casual observer, until the model does something disastrous. The way to catch these accumulated problems that happen over time in the real world, is with monitoring tools that constantly compare the model’s output to the expected value as well as the distribution and quality of the input data in production.

This discussion has so far focused on the applications themselves, but a silent killer of model performance can also come from the network infrastructure layer. Latency, outages, misrouting, and other classic network degradations that can cause traditional applications to fail can also cause behavioral degradation in models. Imagine a complex chain of agents where network latency causes partial or delayed context in a dependency in the chain. That might create issues downstream that are tough to catch because the task does complete, but it does a poor job with worse context, since a tool call here or there silently failed.

Third Point Ventures portfolio company Kentik provides the telemetry collection, correlation, and AI-assisted insight into the network to help prevent these outages across hybrid and multi-cloud environments. If you can catch performance anomalies, understand traffic patterns, and troubleshoot issues before they result in application failure, then you can prevent the network from silently killing your agentic workflows.

Looking Ahead

There is a big gap between the number of enterprise teams experimenting with AI in one or two isolated pilots versus the enthusiasm for scaling these trials in the coming years. The requirement to allow AI to permeate the enterprise stack is no longer proving economic value. That is table-stakes now. What will make this market really explode is the adoption of best practices and vendors in security, durability, and observability that allow AI to operate safely and reliably at scale.

We invest in companies seeking to close this gap and are excited to talk to founders who are creating novel solutions to help organizations bring their AI into production.

References

1 McKinsey, "The state of AI in 2025: Agents, innovation, and transformation," November 5, 2025.

2 World Economic Forum, "Global Cybersecurity Outlook 2026: Insight Report," January 12, 2026.

3 Digit, "Comet AI browser hacked: How attackers breached Perplexity’s AI agent," August 25, 2025.

5 Resolve Pay, "5 Statistics Indicating API Downtime's Cost to Finance Operations," August 26, 2025.

Disclaimers

Third Point LLC ("Third Point" or "Investment Manager") is an SEC-registered investment adviser headquartered in New York. Third Point is primarily engaged in providing discretionary investment advisory services to its proprietary private investment funds, including a hedge fund of funds, (each a “Fund” collectively, the “Funds”). Third Point also manages several separate accounts and other proprietary funds that pursue different strategies. Those funds are not the subject of this presentation.

Unless indicated otherwise, the discussion of trends in the above slides reflects the current market views, opinions and expectations of Third Point based on its historic experience. Historic market trends are not reliable indicators of actual future market behavior or future performance of any particular investment or Fund and are not to be relied upon as such. There can be no assurance that the positive trends and outlook discussed in this topic will continue in the future. Certain information contained herein constitutes "forward-looking statements," which can be identified by the use of forward-looking terminology such as "may," "will," "should," "expect," "anticipate," "project,“ "estimate," "intend," "continue" or "believe" or “potential” or the negatives thereof or other variations thereon or other comparable terminology. Due to various risks and uncertainties, actual events or results or the actual performance of the Fund may differ materially from those reflected or contemplated in such forward-looking statements. No representation or warranty is made as to future performance or such forward-looking statements. Past performance is not necessarily indicative of future results, and there can be no assurance that the Funds will achieve results comparable to those of prior results, or that the Funds will be able to implement their respective investment strategy or achieve investment objectives or otherwise be profitable. All information provided herein is for informational purposes only and should not be deemed as a recommendation or solicitation to buy or sell securities including any interest in any fund managed or advised by Third Point. All investments involve risk including the loss of principal. Any such offer or solicitation may only be mode by means of delivery of an approved confidential offering memorandum. Nothing in this presentation is intended to constitute the rendering of "investment advice," within the meaning of Section 3(21)(A)(ii) of ERISA, to any investor in the Funds or to any person acting on its behalf, including investment advice in the form of a recommendation as to the advisability of acquiring, holding, disposing of, or exchanging securities or other investment property, or to otherwise create on ERISA fiduciary relationship between any potential investor, or any person acting on its behalf, and the Funds, the General Partner, or the Investment Manager, or any of their respective affiliates. Specific companies or securities shown in this presentation are for informational purposes only and meant to demonstrate Third Point's investment style and the types of industries and instruments in which Third Point invests and are not selected based on past performance.

The companies highlighted in this presentation are for illustrative purposes only. There can be no assurance that a Fund implementing the TPV strategy will make an investment in all or any of the deals or on the terms estimated herein. As a result, this presentation is not intended to be, and should not be read as, a full and complete description of each investment that the TPV strategy may make. The analyses and conclusions of Third Point contained in this presentation include certain statements, assumptions, estimates and projections that reflect various assumptions by Third Point concerning anticipated results that are inherently subject to significant economic, competitive, and other uncertainties and contingencies. There can be no guarantee, express or implied, as to the accuracy or completeness of such statements, assumptions, estimates or projections or with respect to any other materials herein. A broad range of risks could cause actual investments to materially differ from the TPV Deal Pipeline, including a failure to deploy capital sufficiently quickly to take advantage of investment opportunities, changes in the economic and business environment, tax rates, financing costs and the availability of financing, selling prices, regulatory changes and any other unforeseen expenses or issues. Third Point may buy, sell, cover or otherwise change the nature, form or amount of its investments, including any investments identified in this presentation, without further notice and in Third Point's sole discretion for any reason.

Except where otherwise indicated herein, the information provided herein is based on matters as they exist as of the date of preparation and not as of any future date, and will not be updated or otherwise revised to reflect information that subsequently becomes available, or circumstances existing or changes occurring after the date hereof. There is no implication that the information contained herein is correct as of any time subsequent to the date of preparation. Certain information contained herein (including financial information and information relating to investments in companies) has been obtained from published and non-published sources prepared by third parties. Such information has not been independently verified and neither the Fund implementing the TPV strategy nor Third Point assumes any responsibility for the accuracy or completeness of such information.

This presentation may contain trade names, trademarks or service marks of other companies. Third Point does not intend the use or display of other parties’ trade names, trademarks or service marks to imply a relationship with, approval by or sponsorship of these other parties.